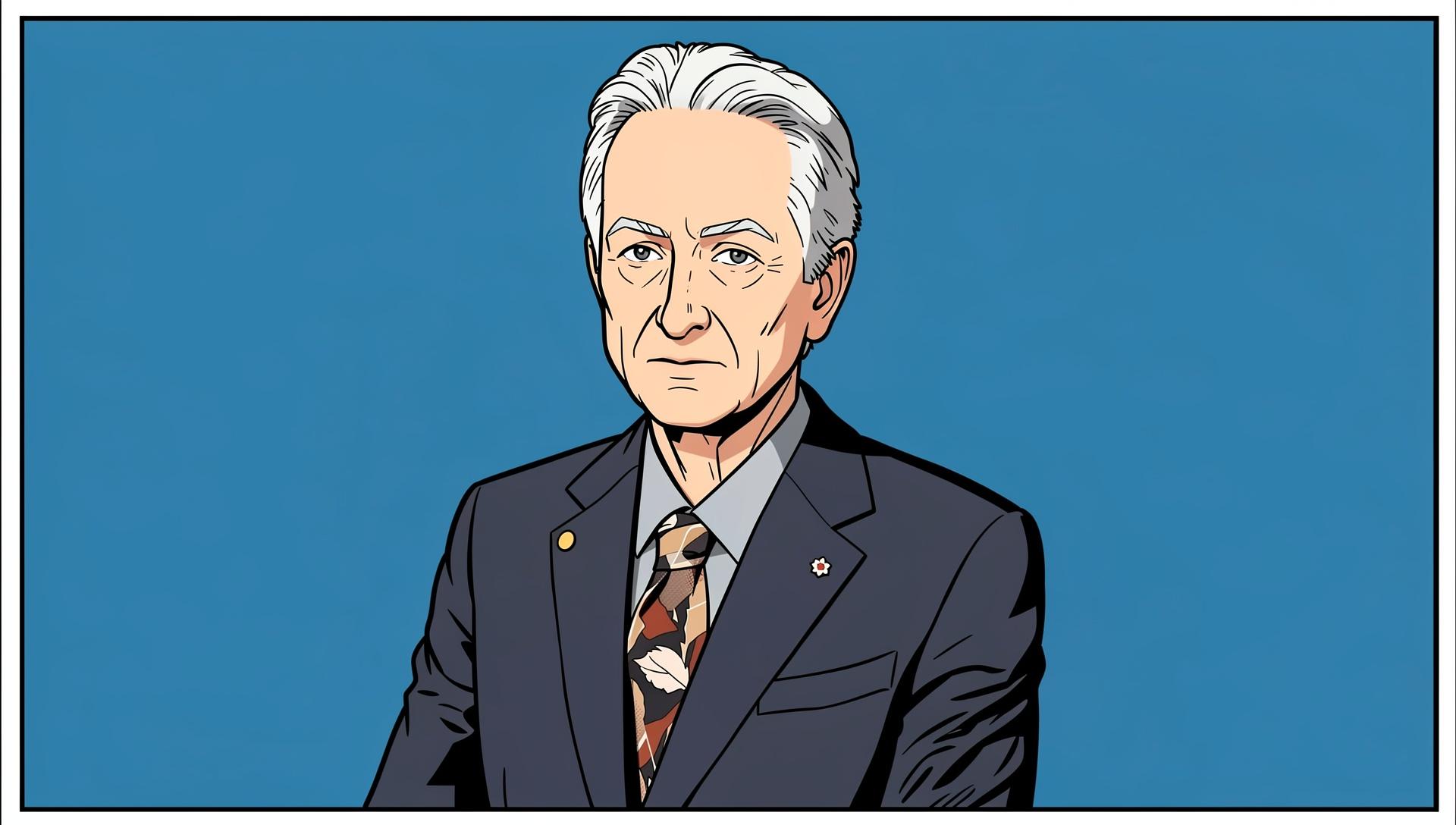

Geoffrey Hinton

Emeritus Professor; Former VP and Engineering Fellow

University of Toronto; Google

Geoffrey Hinton is widely regarded as one of the figures most responsible for the deep learning revolution that produced modern AI. With David Rumelhart and Ronald Williams, he published the 1986 paper "Learning Representations by Back-propagating Errors" in Nature, which demonstrated that backpropagation could train multi-layer neural networks, a line of research that had been largely abandoned. For the next two decades, Hinton kept working on neural networks while most of the machine learning community moved to support vector machines and probabilistic graphical models.

The turnaround came with AlexNet in 2012. Hinton and his students Alex Krizhevsky and Ilya Sutskever entered the ImageNet visual recognition challenge with a deep convolutional network trained on GPUs. AlexNet won with a top-5 error rate of 15.3%, more than ten percentage points below the runner-up's 26.2%. The result redirected the field. Within three years, deep learning was the dominant paradigm in computer vision and speech recognition, and it was rapidly taking over natural language processing.

Hinton received the 2018 Turing Award alongside Yann LeCun and Yoshua Bengio for their foundational contributions to deep learning. In 2024 he shared the Nobel Prize in Physics with John Hopfield for foundational work on artificial neural networks, an unusual case of computer science contributions being recognized through a physics prize.

In May 2023, Hinton resigned from Google, stating that he wanted to speak freely about the risks of AI without being constrained by employment obligations. He has since been a consistent voice warning that AI systems could develop goals misaligned with human interests, which he describes as a potential existential risk. His position is notable precisely because of his technical standing: he is not a philosopher or a regulator but the person who built much of what he is now warning about.